Code Can't Be Fired: Why Blaming AI Is the Ultimate Corporate Cop-Out

It is "who" that is responsible and accountable, not "what".

I am watching the rain hit the windows of the train from Spain to Paris, sipping my third espresso of the day and wondering about something that has been bugging me since I read some timeline slop…

The Myth of the “Deciding” AI

We are living in an era where every piece of software is suddenly branded as “smart”…

From your smart thermostat to massive enterprise LLMs, the marketing pitch is constantly implying that these tools are making decisions for us. But here is the hard, uncompromising truth:

Tools don’t make decisions.

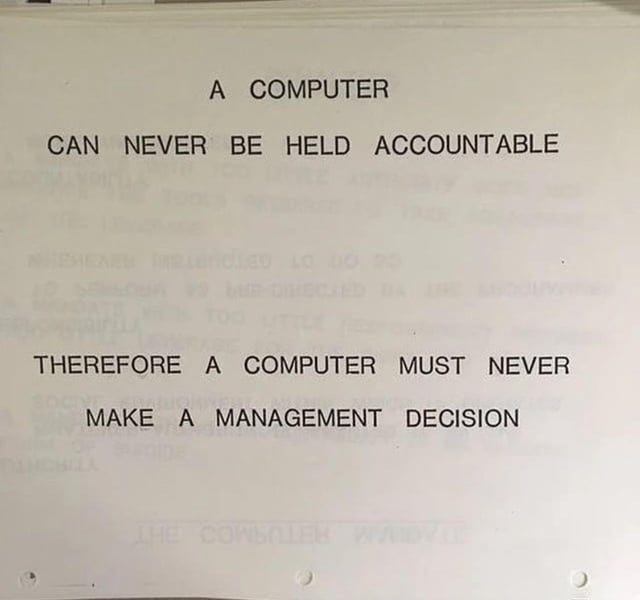

Going to steal a popular meme here, but here it goes: Back in 1979, an internal IBM training slide stated something profound: “A computer can never be held accountable, therefore a computer must never make a management decision”.

Nearly fifty years later, that logic is still completely solid. Yet, I see companies acting like this foundational rule suddenly expired just because we have better GPUs. We need to untangle two concepts that get thrown into the blender way too often: responsibility and accountability.

Responsibility vs. Accountability

In the engineering world, we like clean definitions. Here is the breakdown:

Responsibility is about execution. It’s the engine doing the manual labor. If you need to parse 1,500,000 rows of log data in 0.2 seconds, you give that responsibility to a script or an AI model. It does the heavy lifting.

Accountability is about ownership. It’s about who takes the hit when the server crashes, the data gets leaked or the company loses money.

A piece of code cannot be fired. An algorithm cannot go to jail. An AI model doesn’t care if a project tanks or if it outputs a completely fabricated legal precedent. Because a tool cannot be accountable, it inherently cannot make a decision. A decision carries weight, context and consequence. What the AI actually does is compute a statistical probability and generate an output based on its training. The decision is what you, the operator, choose to do with that output (and choosing to automate the next step is also a decision).

The Ultimate Cover-Up

Lately, “The algorithm did it” has become the modern corporate equivalent of “The dog ate my homework” (I tend to say this a lot lately for a lot of things)…

When an automated HR system filters out perfectly good candidates or a dynamic pricing algorithm artificially inflates the cost of a flight to 2,500.00 euros, the immediate PR response is often to blame the tech. It’s a highly convenient smokescreen...

Blaming the tool is just a “polite” way to cover up:

Mismanagement: Throwing shiny tech at a fundamentally broken business process and hoping it fixes the culture.

Operational Deficiency: Failing to implement proper safeguards, error-handling or hooman-in-the-loop protocols.

Lack of Knowledge: Buying an expensive enterprise AI suite without understanding its parameters, biases or technical limitations.

If your system fails, it’s not because the AI was “bad”. It’s because the operator deployed a tool they didn’t fully understand, in an environment that wasn’t ready for it, without bothering to check the output.

The “Scale” Trap: When the Hooman Drowns

Start with the very first step of the process: selecting the tool itself. Accountability doesn’t just start when the AI spits out a result; it starts the moment you sign the procurement contract for that shiny new system…

Here is the classic scenario. Management buys a high-throughput AI tool to handle, let’s say, network security alerts or customer support triage. They boldly implement a “Hooman in the Loop” policy to feel secure. In theory, perfectly responsible…

But in practice? The machine is churning out 15,000 flags or drafts a day, and there are exactly three hoomans assigned to review them…

You don’t need a PhD in queueing theory to see the bottleneck here. It is like trying to bike through the Leidseplein on a Friday night: complete gridlock. When the hooman in the loop cannot physically keep up with the load the machine generates, something dangerous happens: automation complacency…

Those reviewers get exhausted. They get alert fatigue. Instead of rigorously checking the AI’s math, they start mindlessly clicking “Approve” just to clear the queue. They wave goodbye to their responsibility and accountability altogether, simply because the sheer volume makes critical thinking impossible...

And this is where the situation gets infinitely worse than having no AI at all. Now, you don’t just have a machine making unsupervised decisions; you have a machine making unsupervised decisions with the official rubber-stamp of hooman approval. It gives a massive illusion of safety while actively destroying it.

If the system approves a fraudulent transaction of 50,000.00 euros because the reviewer was too swamped to actually look at it, you can’t blame the reviewer for being slow, and you definitely can’t blame the AI for doing exactly what you bought it to do…

The accountability failure happened on day one. Selecting a tool that operates at a scale of 1,000 while your hooman oversight capacity maxes out at 10 is a fundamental architectural failure. If you buy a Formula 1 car but only hire a bicycle mechanic to maintain it, don’t act shocked when the wheels fall off at 300 km/h…

You have to scale your hooman oversight logically with your automated throughput. If you can’t afford the hooman bandwidth to properly review the machine’s output, you simply can’t afford the machine. It is a solid rule of thumb…
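The back-of-the-envelope math behind that rule of thumb fits in a few lines of Python. The 15,000 items a day and the three reviewers come from the scenario above. The eight focused review hours per day and the two minutes per careful review are assumptions made purely for illustration, and the names `OversightPlan`, `seconds_available_per_item`, `reviewers_needed` and `is_sustainable` are hypothetical. Treat it as a rough sketch, not a staffing model.

```python
import math
from dataclasses import dataclass


@dataclass
class OversightPlan:
    items_per_day: int            # output the tool generates per day
    reviewers: int                # hoomans assigned to review it
    review_hours_per_day: float   # ASSUMPTION: focused review time per reviewer
    seconds_per_review: float     # ASSUMPTION: time one careful review takes

    def seconds_available_per_item(self) -> float:
        """Time each item can actually get with the current headcount."""
        total_seconds = self.reviewers * self.review_hours_per_day * 3600
        return total_seconds / self.items_per_day

    def reviewers_needed(self) -> int:
        """Headcount required to give every item a careful review."""
        capacity_per_reviewer = self.review_hours_per_day * 3600 / self.seconds_per_review
        return math.ceil(self.items_per_day / capacity_per_reviewer)

    def is_sustainable(self) -> bool:
        """True only if oversight capacity covers the automated throughput."""
        return self.reviewers >= self.reviewers_needed()


plan = OversightPlan(
    items_per_day=15_000,
    reviewers=3,
    review_hours_per_day=8,    # assumed
    seconds_per_review=120,    # assumed
)

print(f"Seconds available per item: {plan.seconds_available_per_item():.2f}")
print(f"Reviewers needed: {plan.reviewers_needed()}")
print(f"Sustainable: {plan.is_sustainable()}")
```

With those assumed numbers, each item gets under six seconds of hooman attention, and a careful review of everything would take 63 reviewers instead of three. Change the assumptions to match your own shop, but run the numbers before you sign the contract.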

Who (not what) is responsible and accountable in this context? Do you feel it yet? :-)

Doing It Right: Enter Critical Thinking

I know… I am repeating myself a lot on this… But, how do we navigate this without becoming “luddites” who refuse to use modern tools? It requires a level-headed approach, a healthy dose of common sense and, dare I say it: critical thinking…

Here are a few examples of how to keep the accountability exactly where it belongs:

Treat AI like a brilliant junior developer: If you hire a smart but inexperienced junior coder, you let them write the boilerplate code, but you always review their pull requests before pushing anything to production. You don’t blindly trust their output because they lack architectural wisdom. Apply the exact same logic to your tech stack. It’s a tool to accelerate your workflow, not a replacement for your senior expertise.

Define the “Hooman in the Loop”: For any automated system that impacts real people or real money, there must be a defined hooman checkpoint. Let’s say you’re using an AI tool to draft medical diagnostic summaries. The AI can highlight anomalies in a dataset in 0.5 seconds, which is incredibly efficient. But the doctor signs off on the final diagnosis. If the diagnosis is wrong, the doctor is accountable, not the software vendor.

Audit your inputs and outputs: If your dataset is garbage, your output is garbage. If you use an algorithm to optimize supply chain routes and it accidentally reroutes 5,000 delivery trucks into a flooded zone, the failure wasn’t the AI being malicious. The failure was the operator not validating real-time environmental constraints within the model’s parameters.
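The last two points can be sketched as a small gate in Python: nothing the tool produces gets released until the inputs have been checked and a named hooman has signed off. Everything here is hypothetical and only for illustration, including the names `AIOutput`, `audit_inputs`, `sign_off`, `release` and `MissingSignOff`, the `flooded` field and the reviewer label. A real system would need far more than this.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional


@dataclass
class AIOutput:
    summary: str                         # what the tool generated
    input_checks_passed: bool = False    # set by audit_inputs
    signed_off_by: Optional[str] = None  # the accountable hooman, by name


class MissingSignOff(Exception):
    """Raised when output would be released without a named owner."""


def audit_inputs(output: AIOutput, record: dict,
                 checks: List[Callable[[dict], bool]]) -> AIOutput:
    # Every check must pass before a hooman is even asked to sign.
    output.input_checks_passed = all(check(record) for check in checks)
    return output


def sign_off(output: AIOutput, reviewer: str) -> AIOutput:
    # Refuse a signature on output whose inputs were never validated.
    if not output.input_checks_passed:
        raise ValueError("inputs were not validated; nothing to sign off on")
    output.signed_off_by = reviewer
    return output


def release(output: AIOutput) -> str:
    # The gate: no named owner, no release.
    if output.signed_off_by is None:
        raise MissingSignOff("no accountable hooman has signed off")
    return f"{output.summary} (accountable: {output.signed_off_by})"


# Hypothetical usage: a route suggestion checked against a flood flag.
route = AIOutput(summary="Reroute trucks via A12")
audit_inputs(route, {"flooded": False}, [lambda r: not r["flooded"]])
sign_off(route, "dispatcher-on-duty")
print(release(route))
```

The design choice is that the reviewer's name travels with the output. The tool did the work, but the record says who owns the decision.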

Wild and Powerful

Tools are getting wildly powerful and as a geek, I think that’s a beautiful thing. I love pushing the limits of what my hardware and software can do. But the more powerful the tool, the heavier the accountability on the operator holding it. Keep your system specs high, but keep your standards for hooman oversight higher.

Anyway, my coffee has officially gone cold… Keep building smart things, folks, just remember who is actually steering the ship… The captain… You…

The C-suite want to blame AI so they don't have to admit negligence. I condemn them for it. But I empathize with the worker who is going to be blamed for erroneous outputs after they were forced to use AI, even against common sense and pushback. AI mandates are a scourge on organizations.